This is a decade-plus delayed follow-on to my original investigation and writeup on DMX signals and waveform characteristics, as observed over varying pathologies of RS485 network cabling for lighting control. I didn't have a camera handy back in '04 and thus never captured any oscilloscope shots, relying only on text descriptions of signal degradation. Having acquired a DMX splitter of my own, I figured it was time to revisit that test setup and present the results in a more visual way.


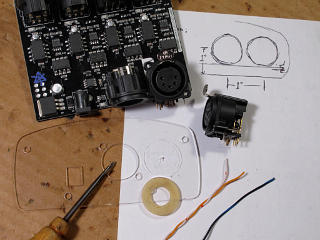

The splitter in question is the Enttec D-Split, a relatively recent arrival on the market at a significantly lower price point than traditional products. It's small; it's the little board, out of its case and naked on the cardboard, near the right edge of the picture. It's driven by my old but still good HogPC setup, with a show file loaded that provides 512 individual DMX channels displayed as decimal output values rather than percent. This allows sending precise values to any given address or "slot".

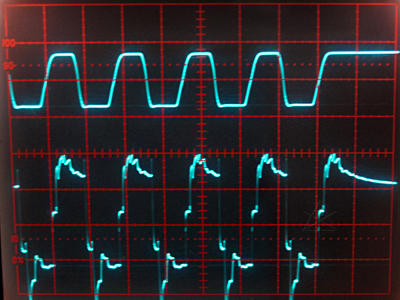

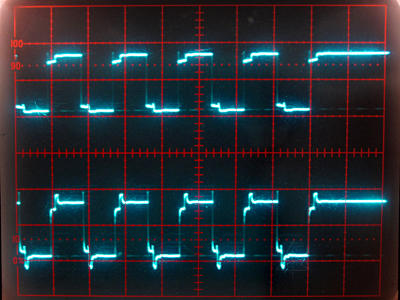

For this testing, value 85 was sent to all 512 channels. Again, that's 0x55 or about 33%, giving a worst-case alternating bit pattern to show, among other attributes, the minimum time necessary for a signal to settle down and be properly read by a receiver. The scope was set up for about a 5 microsec/div sweep and triggered from the negative-going start bit of each value; the trigger would re-arm during the inter-byte time, yielding a nice jitter-free trace of every byte going by. Voltage scale was 2 V/div. Generally the positive network line was read; the negative side is simply its inverse and is affected the same way by changing network topologies. Each test-point was separately grounded to the scope; the splitter does indeed have four fully independent outputs, electrically isolated from each other and from the input, each with its own separate reference ground. While signal shields might end up essentially tied to each other through a rig of fixtures powered from a common PDU, they don't have to be, and the isolation helps protect against ground-loop faults on the control side.
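Because 85 is 0x55, the data bits alternate, and since DMX sends the least-significant bit first right after a low start bit, the pattern runs unbroken from the start bit through the last data bit. The sketch below assumes standard DMX512 framing (250 kbit/s, one start bit, eight data bits LSB first, two stop bits); it lists the line levels for one slot and counts the transitions. `dmx_slot_bits` and `transitions` are illustrative helpers, not part of any library.

```python
"""Sketch: line-level bits for one DMX512 slot.

Assumes standard DMX512 framing: 250 kbit/s, one start bit (0),
eight data bits sent least-significant bit first, two stop bits (1).
"""

BIT_TIME_US = 4.0  # 1 / 250 kbit/s = 4 microseconds per bit


def dmx_slot_bits(value):
    """Return the 11 line bits for one slot, in transmission order."""
    if not 0 <= value <= 255:
        raise ValueError("DMX slot values are 0..255")
    data = [(value >> i) & 1 for i in range(8)]  # LSB first
    return [0] + data + [1, 1]  # start bit, data bits, two stop bits


def transitions(bits):
    """Count level changes between consecutive bits within the slot."""
    return sum(1 for a, b in zip(bits, bits[1:]) if a != b)


if __name__ == "__main__":
    bits = dmx_slot_bits(85)  # 85 == 0x55 == 0b01010101
    print(bits)
    print("transitions:", transitions(bits))
    print("slot length:", len(bits) * BIT_TIME_US, "us")
```

Running it prints `[0, 1, 0, 1, 0, 1, 0, 1, 0, 1, 1]`, 9 transitions, and a 44 microsecond slot, so one byte spans roughly nine divisions at 5 microsec/div. A brute-force check over 0..255 finds no other value with as many transitions inside the slot, which is why 0x55 makes a good worst case.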

Maxim hosts a good description of slew-rate limiting and signal bandwidth, along with guidance on matching their wide range of transceivers to the intended application. While slew-rate limiting definitely helps keep signals cleaner over bad wiring, it isn't strictly necessary for well-built DMX, as we'll clearly see as we go. The next step was to hook up some cables for waveform propagation and distortion testing. Here's the basic setup.


The "driver" could be any DMX sender, be it the Hog widget itself or one of the Enttec outputs. For many of the cable tests, a tap was taken from the direct Hog output as the trigger reference on the upper scope channel.

Since I was already familiar with the Hog transmitter's behavior over different cable setups from before, I was mostly interested in sending the Enttec output into various configurations to record how all those rich harmonics would react to proper or pathological conditions. A fast, edgy square wave like that is far more likely to have transient effects on a transmission line. In general I had one test-point right at the beginning of the run near the sender, and another farther along some length of cable.

For this batch of testing I used my existing stranded-CAT5 cables exclusively, since they are well-proven to work and the comparative exploration of "crappy mic cable" had already been done before. I chose a couple of 50-foot jumpers as the first leg, to reach an arbitrary point that could either be the far end of the line or become a "midpoint" with another 200 feet optionally daisy-chained on. The total length was roughly representative of the rigs we routinely run, but with minimal connectors and no actual receiving devices on it in this case. I could terminate the line at either remote point or leave it open, to observe signal propagation and reflection along different total lengths. The Hog's own signal under similar test conditions is reviewed farther below, just for completeness.
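To relate these cable lengths to the DMX bit time, it helps to estimate how long an edge takes to reach the far end and reflect back. This sketch assumes a velocity factor of 0.65, a typical ballpark for twisted-pair data cable rather than a measured figure for these particular stranded-CAT5 jumpers; `round_trip_s` is a hypothetical helper.

```python
"""Sketch: cable round-trip (reflection) time versus the DMX bit time.

ASSUMPTION: velocity factor of about 0.65, a typical ballpark for
twisted-pair data cable; real stranded CAT5 will differ somewhat.
"""

C_M_PER_S = 299_792_458.0  # speed of light in vacuum
FT_TO_M = 0.3048
BIT_TIME_S = 4e-6  # DMX512 at 250 kbit/s


def round_trip_s(length_ft, velocity_factor=0.65):
    """Time for an edge to travel to the far end of the cable and back."""
    one_way = length_ft * FT_TO_M / (velocity_factor * C_M_PER_S)
    return 2.0 * one_way


if __name__ == "__main__":
    for ft in (100, 300):
        t = round_trip_s(ft)
        print(f"{ft} ft: round trip ~{t * 1e9:.0f} ns "
              f"({t / BIT_TIME_S:.2f} of a bit time)")
```

Under that assumption, 100 feet gives a round trip of roughly 310 ns and 300 feet roughly 940 ns, both well under the 4 microsecond bit. On an open line the reflection bounces back and forth several times while decaying, so the ringing can still occupy a noticeable fraction of each bit.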

Ring, ring ...

The short-duration events are interesting. They may come from the fact that the cable is a twisted pair driven with small and supposedly equal but opposite currents, which should cancel any magnetic fields around or along the pair. The driver chip doesn't switch each side *exactly* at the same time, and possibly not at quite the same rate of current change for positive-going and negative-going edges, so the wire could either be storing and releasing just a tiny bit of magnetic energy during the transitions or coupling pulse edges between the two wires. I also had the cables rolled up and stacked, which may have made them act like a big air-core coil for just a few nanoseconds. Hard to tell, but anything that brief doesn't really matter for all this. What matters is getting things under control by the middle of each bit-time, when the sample is taken.

Now I kept that test-point where it was, at the end of the first 100 feet on the Enttec output, and added 200 more feet of cable downstream. Termination would either be present or absent at the ultimate end of all that, 300 feet from the transmitter.

Upper: Hog reference

Lower: Midpoint of long cable, open at far end. Ick!

Source termination

Next was to look at what happens when a signal source has its own termination at the origin point. The Enttecs run "open", i.e. without anything across their own transmit leads, whereas the Hog has 120 ohms across its output. In fact, the industry is generally unclear on the right thing to do here. The general RS485 spec calls for 120 ohm termination at "each end" of a given bus, and in lighting we generally assume that a console will always be at one of those endpoints, driving the whole rig.

But technically the console is just one of several nodes on a network, and any transmitter can properly be at any point along it. Thus, in the proven *absence* of source termination in a console, its output could legitimately go into a short "Y" cable and head off in two different directions, terminated at the end of each of those legs. That would totally meet the RS485 spec and be a "poor lampy's 2x splitter" without needing any active components. But if the console/transmitter has the 120 ohms across itself internally, that would have to be removed to make such a network robust. At least that is easy to determine up front: with the console powered down, do a simple ohmmeter test across pins 2 and 3 of its output. Anyway, given that the Enttec outputs run open, I wanted to see the effects of *giving* them source-termination at the head end.
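The terminator arithmetic behind the Y-cable idea is just parallel resistance. This sketch ignores cable resistance and receiver unit loads and treats each terminator as exactly 120 ohms; `parallel` and `TOPOLOGIES` are illustrative names only.

```python
"""Sketch: DC load seen by a DMX transmitter from 120-ohm terminators.

Ignores cable resistance and receiver unit loads; only terminators count.
"""

R_TERM = 120.0  # ohms, standard RS485 / DMX terminator


def parallel(*resistors):
    """Equivalent resistance of resistors connected in parallel."""
    return 1.0 / sum(1.0 / r for r in resistors)


TOPOLOGIES = {
    "bus: source-terminated console + far-end terminator": (R_TERM, R_TERM),
    "Y cable: open source, terminator at end of each leg": (R_TERM, R_TERM),
    "Y cable: source-terminated console, terminator on each leg": (R_TERM, R_TERM, R_TERM),
}

if __name__ == "__main__":
    for name, terminators in TOPOLOGIES.items():
        print(f"{name}: {parallel(*terminators):.0f} ohms")
```

The first two cases both present the 60 ohms a normal two-ended bus would. Leaving the console's internal terminator in place drops the load to 40 ohms, below the 54 ohm load that RS485 drivers are specified to drive, which is one way to see why the internal 120 ohms would have to come out.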

Upper: Enttec output at headend, into open long cable

Lower: Midpoint of same long cable

Upper: Source-terminated Enttec output at headend, "happy light" terminator at far end

Lower: Midpoint. Also compare to the similarly terminated midpoint without source termination, above.

Once the long cable was terminated, I could see that all the source-termination really does is lower the overall amplitude of the signal without changing its appearance much. This supports the output topology evolution I had observed in the Fleenor splitter previously -- they'd eliminated any resistors they'd had in the design, either across or in series, and simply pumped the transmitter leads from the chip right into the wire. Existing products began getting production ECOs to remove any "impedance matching" networks at transmitters, and all new products since don't have them, because they turn out to simply not be necessary. Output signals hitting the wire are still completely within RS485 spec and only benefit from having somewhat stronger amplitude and current-sourcing capacity.
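The amplitude drop fits a very rough model of the driver as a voltage source behind some output resistance: adding a second 120 ohms halves the load, so more of the drive is lost inside the source. Both the 5 V open-circuit drive and the 30 ohm output resistance below are hypothetical round numbers chosen only to show the trend; real RS485 drivers are not simple resistors.

```python
"""Sketch: why a source terminator mostly just lowers amplitude.

The driver is modelled as an ideal voltage source behind a fixed output
resistance. Both numbers are HYPOTHETICAL; real RS485 drivers are not
simple resistors, so only the trend means anything.
"""

V_OPEN = 5.0     # hypothetical open-circuit differential drive, volts
R_SOURCE = 30.0  # hypothetical driver output resistance, ohms
R_TERM = 120.0   # standard terminator, ohms


def parallel(a, b):
    """Equivalent resistance of two resistors in parallel."""
    return a * b / (a + b)


def differential_amplitude(load_ohms):
    """Steady-state voltage across the load (simple voltage divider)."""
    return V_OPEN * load_ohms / (load_ohms + R_SOURCE)


if __name__ == "__main__":
    far_end_only = differential_amplitude(R_TERM)
    both_ends = differential_amplitude(parallel(R_TERM, R_TERM))
    print(f"far-end terminator only: {far_end_only:.2f} V")
    print(f"source + far-end terminators: {both_ends:.2f} V")
```

With these made-up numbers the far-end-terminated line sits at 4.00 V and the doubly terminated one at 3.33 V. A DC divider like this only captures the scaling, which is the part the traces showed changing.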

Note that in the next evolutionary step when RDM or other bidirectional communication needs to be supported, things get far more complex as each head-end source *does* need termination for data sent back from downstream. And splitters need to know how to pass *and arbitrate* that from their own outputs. There are several reasons the industry seems to be moving toward straight-up packetized Ethernet instead... |

The Pig very softly says "oink"

Next was to lay the Enttec aside for a moment and take another look at the Hog output behavior under the same test conditions, just to recall how the slew-rate limited Maxim chip helps everything by getting rid of all those nasty harmonic edges up front.
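One way to see what slew-rate limiting buys is the common rule of thumb that an edge with a 10-90% rise time t_r carries significant energy up to about 0.35 / t_r. The rise times in this sketch are hypothetical examples, not measurements of the Hog's Maxim part or the Enttec's driver.

```python
"""Sketch: rule-of-thumb bandwidth of a digital edge, f ~= 0.35 / t_rise.

The rise times used below are HYPOTHETICAL examples, not measurements.
"""

BIT_TIME_S = 4e-6  # DMX512 at 250 kbit/s
# An alternating 0x55 pattern is a square wave with a period of two bits.
FUNDAMENTAL_HZ = 1.0 / (2 * BIT_TIME_S)  # 125 kHz


def knee_frequency_hz(rise_time_s):
    """Approximate upper frequency of significant energy for a given rise time."""
    return 0.35 / rise_time_s


if __name__ == "__main__":
    print(f"0x55 fundamental: {FUNDAMENTAL_HZ / 1e3:.0f} kHz")
    for label, tr in (("fast edge (hypothetical 10 ns)", 10e-9),
                      ("slew-limited edge (hypothetical 250 ns)", 250e-9)):
        print(f"{label}: ~{knee_frequency_hz(tr) / 1e6:.1f} MHz")
```

Either way the useful information sits at 125 kHz and a few harmonics above it; a slower edge simply stops pushing tens of megahertz into a cable that behaves as a transmission line at those frequencies.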

... But I'm rather biased

On looking more closely at the impedance characteristics of the Enttec, I found that it seemed to have a fairly low passive resistance to ground from one of the signal pins, and when powered up it seemed to hold its own input pins fairly firmly around 4.6 and 0.5 volts instead of presenting the high impedance I might have expected. Why so, on a *receiver*? This needed further investigation.

Production folks frequently mention termination as a necessary part of any well-constructed control network, but what they rarely if ever talk about at all is "failsafe bias". If nothing is actively transmitting onto a network bus, all the transceivers stay tri-stated to high impedance and the line is effectively open at a zero-volt differential. This is technically an indeterminate state, and adding a little noise on top of that can make some narrow-threshold receivers "go nuts" and chatter to produce a stream of random bits -- likely to make some lighting fixtures start wigging out in an uncontrolled fashion. To guard against this, resistors are added to gently "pull" the idle network voltages apart toward their mark state, which any transmitter can then override when needed. These would properly be connected to some node's +5 power supply and ground at ONE point in the network, which despite what this example shows doesn't have to be at the same place where the termination resistor is.

With two terminators present on a line and the limit of 32 "unit loads" at 12Kohms each, the math works out that biasing through 720 ohm resistors will pull the signals just far enough apart to be outside the spec +/- 200 millivolt "maybe" deadband and assert a solid mark state or "1" bit. So in a lighting rig, which device is responsible for providing this? Almost unbelievably, there is NO standard answer to that question. We might expect the console to handle it by always being in transmit mode and thus rendering bias a non-issue, except then what if the console is disconnected or powered down with the rest of the rig still active? That happens all the time in real life. But if *every* receiving device tried to apply such a line bias on its own, the network would wind up so loaded-down that nothing would be able to communicate at all. So this remains one of the great unanswered questions around the industry, and one largely ignored by most on-the-ground technicians when stuff "just works". Usually an open DMX line will stay quiet enough that the receivers on it will go to either a "1" or a "0" state and stay there, so we generally just get lucky. However, if the input to a *splitter* feeding many more downstream devices begins chattering up and down and sending random data onward, that would be (bad * N) active branches. So the Enttec designers apparently decided that the splitter is responsible for providing full-load-capable bias on that leg, just to make sure its own input stays in a predictable state.
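The 720 ohm figure can be reproduced with a simplified divider: a pull-up from +5 V to one line, an equal pull-down to ground on the other, and the two 120 ohm terminators (60 ohms in parallel) between them. This sketch ignores the receivers' unit loads, which would tend to shunt some of the bias current and push the required resistor value somewhat lower; `idle_differential`, `max_bias_resistor`, and `receiver_state` are illustrative names only.

```python
"""Sketch: simplified RS485 failsafe-bias divider.

Pull-up (r_bias) from VCC to the + line, pull-down (r_bias) from the - line
to ground, and the two parallel terminators between the lines.
Receiver unit loads are ignored (a simplification).
"""

VCC = 5.0
R_TERMS = 120.0 * 120.0 / (120.0 + 120.0)  # two 120-ohm terminators: 60 ohms
THRESHOLD = 0.200  # receiver sensitivity, +/-200 mV


def idle_differential(r_bias, vcc=VCC, r_terms=R_TERMS):
    """Differential voltage across the pair when no driver is active."""
    return vcc * r_terms / (2.0 * r_bias + r_terms)


def max_bias_resistor(vcc=VCC, r_terms=R_TERMS, v_min=THRESHOLD):
    """Largest r_bias that still holds the idle line at v_min or more.

    Solves vcc * r_terms / (2 * r_bias + r_terms) >= v_min for r_bias.
    """
    return (vcc * r_terms / v_min - r_terms) / 2.0


def receiver_state(v_diff):
    """What a receiver meeting the +/-200 mV spec is guaranteed to output."""
    if v_diff >= THRESHOLD:
        return "mark (1)"
    if v_diff <= -THRESHOLD:
        return "space (0)"
    return "indeterminate"


if __name__ == "__main__":
    print(f"max bias resistor: {max_bias_resistor():.0f} ohms")
    print(f"idle differential with 720 ohms: {idle_differential(720.0) * 1e3:.0f} mV")
    print("unbiased open line:", receiver_state(0.0))
```

The 0 V case is the indeterminate open line described above. By contrast, the roughly 4.6 V and 0.5 V the Enttec holds on its own input pins amount to about 4.1 V of differential, well past the threshold and consistent with the designers choosing to bias that input hard.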

Let my signal go

I pondered a bit how to fix the termination problem. Simply living with it as shipped really wasn't an option, given what I now knew about it. I could simply terminate the input internally and warn fellow techs about that, at best restricting splitter placement to the end of a run. Or I could do it right and fit a female connector into the fairly generous amount of space at the corner of the board. That's what I opted for, and I ordered the appropriate parts from Full Compass. At the same time I decided to flesh out my stock of 5-pin XLR in general, mulling the idea of building a few new cables, and threw a few of the new Neutrik "XX" series in-line heads onto the order as well. They've made some minor improvements on the classic shell and strain-relief design.

The socket would certainly fit in; it was just a question of mounting and connection. A few measurements revealed the interesting fact that the centerline of the new hole had to be exactly an inch from the other one and an inch up from the bottom of the transparent cover plastic. [Odd choice of materials, I must say.] This "serving suggestion" shot shows rough fitment, the washer of an appropriate diameter I used to guide scribing the necessary cutout, my quick drawing of the operation, and the wire I'd use for any interconnects.

Feedback fun

At some point during all this I happened to think, okay, what happens if you feed an output back into the input, but inverted? With these test-breakouts available that was easy to set up. A splitter output was sent back around to the input jack, wired pin 2 to pin 3 and pin 3 to pin 2, while another output was scoped. The somewhat amusing result came immediately upon plugging the loopback in: as the device is just a dumb repeater, it simply oscillates, screaming along at about 5 MHz. I might have gotten it to go a little faster by using shorter test clips or a special shielded jumper, but I already had enough of an answer by then.
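Swapping pins 2 and 3 turns the splitter plus its loopback into an inverting loop, which behaves like a one-stage ring oscillator: each trip around the loop flips the level, so a full cycle takes two trips. Read that way, the observed frequency gives a rough total delay through receiver, isolation, driver and test leads. `loop_delay_s` is an illustrative helper, and the result is an inference, not a measured propagation spec.

```python
"""Sketch: loop delay implied by an inverting feedback oscillation.

For an inverting loop, one period = two passes around the loop:
    f = 1 / (2 * t_loop)   =>   t_loop = 1 / (2 * f)
"""


def loop_delay_s(osc_freq_hz):
    """Total propagation delay around an inverting loop oscillating at osc_freq_hz."""
    return 1.0 / (2.0 * osc_freq_hz)


if __name__ == "__main__":
    t = loop_delay_s(5e6)  # roughly the 5 MHz seen on the scope
    print(f"implied loop delay: ~{t * 1e9:.0f} ns")
```

At about 5 MHz that works out to roughly 100 ns around the loop, which also fits the hunch that shorter clips or a tighter jumper would nudge the frequency up: they trim a little of that delay.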