Now what?


A friend had given me a big ol' rackmount APC Smart-UPS 1400XL about a year

prior to this, and I went out and bought it a new set of batteries to

restore it to service [which is usually all a "dead" UPS ever needs].

This is a 48V system, using four of the large 20-Ah sealed lead-acid bricks,

and in this particular model is engineered more for long runtime than high

power. Fully assembled, it weighs close to 100 pounds and is a real joy

to move around and work on. A necessary step, however, was getting in

there to desolder and completely remove the annoying little beeper

inside, which involved a fair bit of disassembly. The fan in this unit is

loud enough -- the last thing anyone needs when running on battery and

wondering when commercial power will return is something going "meep,

meep, meep, meep" every minute or so.

After getting it back together and working, and playing around with a little charge/discharge cycling and load-testing, I brought it to Arisia '11 for the video guys to run their switchers and content-servers from, so that if we did have a power problem in the ballroom we were less likely to interrupt the running TV feed into the hotel's headend. This in fact came in very handy at strike: when we needed to take down all the temporary power, they were able to transition over to local wall power without a break. I brought it back home after that and more or less put it in storage for the remainder of the year. Around the time of the next haunt I thought that it might be a good backup supply for some of that setup, so I went to fire it up and make sure it was charged.

With typical APC units, there's a trick called cold-booting where holding down the "on" button for a couple of seconds and letting go will allow the unit to start up on battery without needing to be plugged into line power. Usually doing this elicits various LED flashing on the front panel and relay clicks inside, as does plugging it into the wall, where incoming power enables various parts of the electronics too. This time, all I got was the briefest, barely noticeable blip in the panel LEDs, only once, and then nothing. Zip. Plugged into the wall or not, the thing remained totally stone dead. Usually when it's plugged in one can hear a low hum and the battery gets charged/maintained, even if the unit isn't officially "on" and powering a load. But this one had gone completely tango-uniform catatonic on me, when it had been working fine the last time it was powered off.

Damn. I just *had* this thing apart, and I didn't want to have to dive inside it again. First thing to check, of course, was the pack voltage: 52 and change -- still full after sitting around for several months. With batteries less than a year old, there was no reason the pack should be in question; something else was wrong.

The tiny blip I had observed was likely the last gasp from internal power-supply caps that still had some charge, which gave me one or two diagnostic tips up front -- for example, that the front panel buttons themselves were still working and that the processor may have been trying to boot. The wall-power input breaker wasn't tripped, and in fact the little zap any time I'd go to plug it in confirmed that and told me that *something* inside was drawing a little power. [Small pix link to large ones; use them for more detail.]


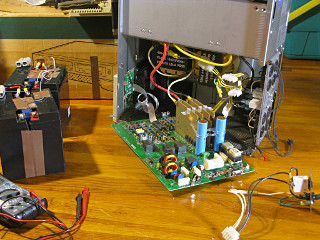

I should mention that every SmartUPS-class unit I've worked on has been

a pain in the ass from a physical standpoint, as APC tends to hardwire

a lot of the heavy interconnects without much of a service loop and once

the cover is off, there isn't much extra length to allow repositioning. I

could see the value of direct wiring on high-current paths but FFS do we

really need to limit the wires from the inverter heatsink to the main

transformers to only six inches?? On this one I managed to turn the board

out 90 degrees but clearly it would need to sit across the metal edge of the

case with an insulating pad underneath since I couldn't pull it out any

farther. No user-serviceable parts inside becomes an issue of no

*technician*-accessible parts inside when stuff is put together this way.

Especially when you're going at this without benefit of a schematic.

I called APC, and after getting the useless people in the Philippines to finally send me back to the Stateside support office, learned that APC is now part of Schneider Electric -- a company based in France. How profoundly ironic, that *American Power Conversion* is now owned by the French and has outsourced its support to Asia. Nothing American about it anymore, except that they still have their offices in Rhode Island. Where's our freakin' national pride anymore? Burned up in corporate profit-mongering, that's where.

Long, painful phone-tag story short, I finally managed to talk to a fairly helpful domestic tech who reviewed the "braindead reset" trick -- hold down the off button for about ten seconds with everything disconnected, which effectively causes the unit to fully shut down. I'd tried that already, done all the other obvious checks that he could think of, and had a clearly good pack hooked up. He was at a bit of a loss and offered that perhaps one of the AVR (automatic voltage regulation) relays had welded itself in a wrong state. These units have buck/boost capability as part of their power conditioning when online, which is done via relay-switched transformer taps. Kind of an odd area to suspect considering the last thing that had happened was a normal shutdown from working, but I duly ohmed them out and found all the relays relaxed in their expected off state.

Fortunately I was able to have this conversation without opening a ticket and spewing all kinds of serial numbers and personal information they wanted to ask for. Next time I hear some front-liner say "but the computer won't let me ..." I swear I'm going to reach right through the fiber pipe and strangle them.

With the decidedly nontrivial investment in this unit's new batteries still fresh in my mind I didn't want to just give up on it. The house already has enough heavy, non-functional bodankers kicking around it; I don't need more. I *use* my UPSes, whether they go on the road or support things on the home front. I really needed a schematic at this point, which was naturally not forthcoming from APC, and applied various google-fu looking for additional hints from people who might have dealt with the same sort of thing. Finally on some obscure forum there was a short post on a repair-related topic exclaiming "jackpot!" and pointing to a useful little site in .RU somewhere. (Go web-whack its content for yourself before it gets taken down!) Now armed with the 1400-class and 2200-class documentation, I could continue my study.

One puzzling observation was that the board traces coming from the two little auxiliary transformers are *tiny* and run a long way up to the logic section. These and the transformers themselves don't look like the kind of hardware that's going to handle a few amps of charging batteries. In the picture above [use the large one], they're the two small blocks just southeast of the large blue filter caps on the main board. There aren't any of the big high-current rectifiers one would expect to find, either. The schematic confirmed that not only is there no specific charging or auxiliary-power section from the wall; all the logic and control power is derived solely from the *battery* side of things via a small fused connection that I had missed so far. [This is why all such units need a pack connected to work at all.]


This picture also shows a hint that the parts to the left of the fuse

may have been running a little hot, as the white part-number paint is

a little discolored. Remember, the board runs with this surface upward

and the parts hanging down, so heat would rise straight toward the board

and accumulate here. I didn't see any cooked or dry solder connections

around the area and there weren't any obviously-roasted parts topside,

but it was another one of those "tech eyeball" things to take note of as

symptomatic.

I also needed to have the pack voltage present, so just for initial testing it got carefully alligator-clipped into its connection points from next to the board instead of being fully reinstalled -- *with* the understanding that if the unit tried to draw heavily on the battery, those clip-leads could turn into big flamin' fuses themselves. Whatever. It would be enough to run the electronics, and one thing I wanted better control of was that big fat spark you get on plugging the battery into its normal connections, as the filter caps are *right* across it, switched only by your insertion of the Anderson connectors on the pack. With everything exposed like this I could insert a hundred-ohm or so resistor briefly to let the caps charge slowly, and then hook up for real. Most UPS designs don't bother accommodating this because then you'd need a precharge resistor and a high-current battery relay with arc suppression; it's usually easiest to wire straight into where the power will be used, and assume that the big Anderson connectors can take a high-current zap or three over their lifetime.

Hmm. Fifty volts or so supplying something downstream; that's got potential for a lot of power dissipation. Remove all jewelry and watches, remember? I briefly dropped a largish 100-ohm power resistor across my zip-cord and felt for heat, as the half-amp max into a shorted load would have produced 25 watts. But I didn't see any sparks or feel anything ... clipped it back in, waited in vain for smoke to emerge from somewhere on the main board, and then reached over and fingered the on-button and was rewarded with a snappy "ta-click! chunk" as a couple of relays tried to engage. There was also a familiar flash in the front panel LEDs. Holding the button down caused this cycle to repeat by itself, so obviously my 100-ohm fake fuse was supplying the brains of the unit but not with enough to keep it thinking.

I substituted a 10-ohm resistor and added an ammeter inline, and on a button push the relays in question came on and held. I could then hear a soft whine from the main board -- the type of subtle change that tells the aware technician "hey, it's running!" and makes all the difference between theoretical and practical approaches to troubleshooting. Meanwhile I was hawking the board against a bright worklight watching and sniffing for the magic smoke a-risin', listening for anything frying itself, running my hand over everything to feel for heat. Nothing, and according to my ammeter it wasn't pulling much at all -- maybe 100 mils or so.

Faking across the fuse seemed to have brought back normal standby operation of the UPS, and to finally give full current capability across that connection but still protect it I found an ancient panel-mount circuit breaker in dusty old stock claiming to be rated at 2 amps or so, less than the spec 5 but more than my observation of real-life draw. Now my zip-cord kludge connection had that and the ammeter hung off it, at a comfortable distance *away* from the rest of this scary lashup.
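For anyone wanting to check the resistor arithmetic above, here is a minimal sketch. The 50 V pack figure, the 100-ohm and 10-ohm values, and the roughly 100 mA draw come from the text; the helper name `series_resistor_limits` is made up for illustration, and treating the load as a constant 100 mA is an assumption (that draw was only observed with the 10-ohm part in place).

```python
# Back-of-envelope numbers for the stand-in "fuse" resistors.
# Voltage and resistor values are from the text; the function name is hypothetical.

PACK_VOLTS = 50.0  # roughly where the pack sits


def series_resistor_limits(r_ohms, load_amps=0.0, volts=PACK_VOLTS):
    """Return (short-circuit amps, short-circuit watts, voltage drop at load_amps)."""
    short_amps = volts / r_ohms        # worst case: dead short downstream
    short_watts = volts * short_amps   # all of it burned in the resistor
    drop_volts = load_amps * r_ohms    # what the board loses at a given draw
    return short_amps, short_watts, drop_volts


for r in (100.0, 10.0):
    amps, watts, drop = series_resistor_limits(r, load_amps=0.1)
    print(f"{r:5.0f} ohm: short = {amps:.1f} A / {watts:.0f} W, "
          f"drop at 100 mA = {drop:.0f} V")
```

At an assumed 100 mA the 100-ohm part would already drop about 10 V, and any extra relay pull-in current would sag the supply further, which fits the click-and-reset cycling. The 10-ohm part costs only about a volt at that draw, but would pass 5 A into a short, hence the breaker afterward.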


This unit has two of the large transformers that are found in nearly every

larger Smart-UPS class unit. Their nominal voltage is somewhere

between 18 and 24, and the low-voltage inverter

primaries are simply hooked up in series for a 48-volt system and the

HV secondaries connected in parallel. This allows for essentially a

single control-board design for the entire model line, with a few parts

added and some thresholds reprogrammed to scale up. In fact this one, a

1400 VA or ~ 900W rated unit, only has half the output transistors installed

at the inverter heatsinks, where a 2200 VA unit would have more of them

and a 3000 VA unit would have a fully-populated rack. Interestingly,

though, the rest of the board here seems to match the 2200 schematic much

more closely, particularly in part numbers, than in what's given as the

"1000/1400" schematic. Still, gaining understanding of one particular

model is an education in how any of them work.

The big transformers are an integral part of the unit's operation. Unlike cheap "back-ups" type units that just click over to inverter operation on line failure, these are always in the loop even when the unit is on wall power. Taps on the high-voltage side can be switched in and out to correct for high or low input voltage, with appropriate "boost" or "trim" indications lit on the front panel. That appears to be done passively, as it's a simple approach using relays.

What I didn't know before starting this is that the low-voltage side and inverter section are always running in lockstep with the AC line, not necessarily *producing* power under normal on-line operation but in fact taking some out of the system to charge the battery. The inverter is a full H-bridge built from IRF3710 HEXFET devices which have internal reverse diodes, so it is likely parasiting a little energy from the transformer and doing a similar flyback trick to what a Prius does to produce charging voltage much higher than its motors would natively generate. In fact, trying to run one of these units without the transformer high-side leads plugged in produces some really nasty noises, and should clearly be avoided.

The small transformers on the board are for the most part used to bring independent isolated half-wave line voltage references to the control circuitry, where the internal sinewave reference that gets clocked out of RAM can be phase-matched and continually checked for deviation that would trigger going to battery. Even under inverter operation the high-side output is used as feedback into the driver circuitry to shape the output sinewave, and in fact to APC's credit the Smart-UPS line has some of the *cleanest* sinewave output I've seen, pretty much up to the maximum ratings. At least into resistive loads -- I haven't done a lot of testing with high direct-rectified loads or big motors. It gets a little wiggly and starts flattening as the battery nears depletion, but that doesn't bother most of the things you'd be powering.

But my diagnosis still wasn't out of the woods, as I didn't know what killed the fuse and hadn't yet tried on-battery operation. What I did notice after a short time on-line was that the parts near the ex-fuse and discolored paint were a bit warm -- not destructively so, but warmer than I thought small transistors and resistors should be. And the warmest areas matched the pattern I could see in the paint. Running the board tipped up, I could feel the same heat in the backside and it was clear that this had been going on for a long time because paint generally doesn't discolor like that from a single short event without there being evidence of much more widespread destruction. My fuse-substitute was still showing the same safely low supply current, and I began to wonder if this was normal. My old 2200 produces a little heat at certain areas of its top cover, but I think that drifts up from the transformers.

However, high ambient heat levels often can make fuses falsely or prematurely blow, as they operate on the principle of heating anyway. So I began to suspect that a generally warm area on the board had taken this one out over time and that perhaps nothing was really wrong. The discoloration around the area, particularly on the copper and masking near the fuse, makes it look potentially a lot worse, but this unit was in service for quite a few years before I got it.
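The line-tracking idea described above (a stored sinewave reference compared against the incoming line, with a deviation triggering transfer to battery) can be sketched roughly as follows. This is only an illustration of the general technique, not APC's firmware: the table size, tolerance, strike count, and the function name `line_is_bad` are all invented, and the samples are assumed to be already normalized and phase-aligned with the reference.

```python
import math

SAMPLES_PER_CYCLE = 64  # hypothetical table size

# Lookup table standing in for the reference "clocked out" of memory.
REFERENCE = [math.sin(2 * math.pi * i / SAMPLES_PER_CYCLE)
             for i in range(SAMPLES_PER_CYCLE)]


def line_is_bad(samples, tolerance=0.15, max_strikes=4):
    """Return True if the line strays from the reference for more than
    max_strikes consecutive samples. Thresholds are guesses."""
    strikes = 0
    for i, measured in enumerate(samples):
        expected = REFERENCE[i % SAMPLES_PER_CYCLE]
        if abs(measured - expected) > tolerance:
            strikes += 1
            if strikes > max_strikes:
                return True   # sustained deviation: go to battery
        else:
            strikes = 0       # a good sample clears the count
    return False


good = list(REFERENCE)
dropout = good[:20] + [0.0] * 12 + good[32:]  # line vanishes near the peak
print(line_is_bad(good), line_is_bad(dropout))
```

Requiring several consecutive bad samples is one simple way to ride through a single noisy reading while still reacting within a fraction of a cycle.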


Just looking at this I had no idea why any of it should be running hot,

powered by just 12 volts. The transistors are supposed to be good for half

an amp apiece [pretty impressive for little TO-92 packages, I have to say]

and they're coupled in a way that should prevent both of them passing

current at the same time. The whole little group is just feeding gates

of the MOSFET output array, and while that's a relatively low-impedance

capacitive load being switched at high speed, it shouldn't be *that* much

of a burden. The similar driver section farther down on the same page

runs similarly warm, so without

seeing an obviously out-of-spec part in one place I began to think that

maybe this is just how the thing runs. The low-side driver feed components

are a little less warm, but certainly not stone-cold either. Hard to believe

that such a quirk would have escaped notice by APC's lab guys, however.


Nonetheless, it did, and for many years. See the resolution page for the final diagnosis and fix!
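For a sense of scale on the gate-drive burden mentioned above, the usual estimate for the average power needed to charge and discharge MOSFET gates is gate charge times drive voltage times switching frequency, per device. The sketch below uses that formula; only the 12 V drive figure comes from the text. The gate charge, switching frequency, and device count are placeholder assumptions to be replaced with datasheet and measured values, and `gate_drive_watts` is a made-up helper name.

```python
def gate_drive_watts(gate_charge_coulombs, drive_volts, switch_hz, fet_count):
    """Average gate-drive power: P = Qg * V * f for each device."""
    return gate_charge_coulombs * drive_volts * switch_hz * fet_count


# Only the 12 V figure is from the text; the rest are assumptions.
QG = 130e-9      # assumed total gate charge per FET, coulombs (check the datasheet)
F_SW = 20_000    # assumed switching frequency, Hz
N_FETS = 8       # assumed number of output devices being driven

watts = gate_drive_watts(QG, 12.0, F_SW, N_FETS)
print(f"{watts:.2f} W")
```

With numbers in this range the result is a fraction of a watt spread across the whole driver group, which supports the hunch that gate drive alone shouldn't cook anything. Different assumptions would shift the figure, so treat it as an order-of-magnitude check only.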


Long-term hot operation could cause something to fail, and if for example some piece of this were to go shorted in a way that left the main FET gates on, disaster would clearly strike on the next half-cycle. Perhaps that's what happened to this poor fellow, and if your understanding of Polish is any better than google-translate's you can probably get a better idea of how careful he was trying to be. The post shows a picture of the same board area which looks quite a bit more cooked than mine. Or maybe it wouldn't blow up, and the system would protect itself a little better than I give it credit for. Amusingly, pressing harder on some of the nearby connections to feel heat with the backside of my finger made the whole unit abruptly shut down, as my skin conduction likely upset the signal balance into some of the sensitive high-impedance parts in the chain. That told me that the circuitry is nominally smart about error detection; if it can't track a valid sinewave correctly then it does the right thing and immediately gives up before something bad happens.

The unit then ran on battery just fine too, and switched back and forth between wall and battery just like it should. Interestingly, the hot parts *cooled down* when the unit was running on battery so evidently the excess heat is only something having to do with running in charge mode. I reproduced that fact several times while testing all the various modes. But for all intents and purposes, the whole unit was working again.

There, I fixed it.

This little repair comes with the caveat that the full 50+ volts of the

battery pack appears at the fuseholder connections, now accessible to a

hand stuck into the hole. This unit is heavy enough that it's often

convenient to use that hole as a handle for moving it around, as it's

one of the few non-sharp parts to grab around the back end. That's

one reason I mounted the holder where it is, so that I could still grab

the *outer* part of the hole for schlepping the brick. 48 or 50 volts

is getting up near the thresholds where people with moist skin can

actually get a perceptible shock, and if I wet my finger and press hard

I can feel it too. The hybrid and EV crowd generally says 60V is where

you have to start being more concerned about electrocution hazards; on

the other hand there are those who say you could kill yourself with a

9V battery and two open hand wounds. So I'll slap a little tape over

the fuseholder when the unit goes on the road. It's still easier than

it would have been to find and mount a suitable mini-breaker someplace.

Interim decisions

I guess the inverter pre-driver will just continue on being warm for the time being, as I'm not up for trying to really dope out why it is or rebuild that whole area of circuitry with beefier parts. If it fails again, at least I know about where to start looking and now you the gentle reader do too. Someone else might read this and start by scoping across R38 to figure out how much average power it's trying to dissipate under different UPS operational conditions and whether we just need bigger parts or if there's a more fundamental problem; I may attack this someday myself and if I get around to it, there will certainly be an update here.

If all the SmartUPS family control boards have this issue, I must only conclude that it's an inherent design flaw in the product. In digging around a little further I discovered that other users have observed similar symptoms and tried to call out APC *on their own hosted forum*, but despite several posters chiming in "me too" APC had basically blown them off. It seems likely that this has been a problem since 1996 or whenever and only recently are units starting to fail more often from the long-term component stress. If yer asks me, APC or Schneider or whoever they are this week needs to take a little more responsibility for this sort of thing. While their legalese specifically deflects any accountability for life-safety situations, a lot of people rely on their UPSes for business continuity and don't expect them to just randomly fail from low-spec parts choices.

2013: Full circle

About a year and a half after this original page went up I had an opportunity to get final resolution on the problem, straight from one of the original APC company founders. See part 2 for the entertaining story!

In the process of researching around I found some generally interesting sites that discuss UPS issues and diagnosis.

APC Discussion Forums : Network & Server UPS